We often speak of black hat SEO tactics and content scraping sites are just one example of such tactics. Scraping is the act of copying all content from a website using automated scripts, usually with the intention of stealing content or completely cloning the victim’s site. Lately we have been seeing quite a high number of clients affected by these so-called scraper sites. We’ll take a look at this kind of attack in an advanced form that results in the cloned site showing up in search results in place of the original site. These plagiarized sites abuse the way Google ranks content by sending fake organic traffic and by modifying internal backlinks on the cloned website so they no longer point to the victim’s website.

How Search Results Rank Website Content

Search engines want to return the best and most relevant pages in their search results to ensure that users have the best experience and find what they are looking for. As such, pages with the same or similar content on more than one page, or more than one site are not likely to rank high in the search results. One of the factors they take into consideration is the site’s organic traffic performance. This helps determine where that site should be ranked. In addition to many other factors, Google uses redirects to track which results the searcher clicks on within the search engine results page (SERP), and whether the searcher returns to click other results because they did not find what they were looking for.

As per study by Chitika in 2013:

Sites listed on the first Google search results page generate 92% of all traffic from an average search.

It makes sense that any kind of SEO targeting attack aims to get the best results they can within Google Search results can so that their activity can be successful and generate as much revenue as possible, or simply damage the SEO of the targeted website.

Signs of Being Affected by Scraper Sites

Content scraping tactics allow attackers to abuse the relationship your website has with search engines by copying your content and making it so that they are unable to determine which is the authoritative source. The worst part of this kind of attack is that you only notice it when it’s already too late – either when your search engine results page (SERP) rankings drop or you see other websites on the results page that are not yours.

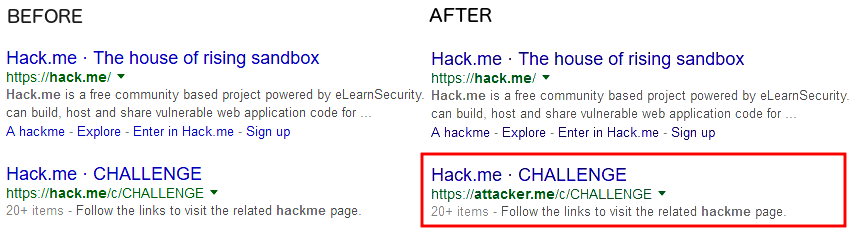

I created scenario to demonstrate this attack for better understanding. Let’s assume that the victim’s website is hack.me and attacker.me is the cloned website.

Before and after the attack:

In this image we see that the attacker has effectively stolen the original website’s ranking within Google search.

An important step in knowing how to better handle this is to identify how exactly the content is being stolen:

- If changing content on your website immediately changes the content on the cloned website this means that it’s an automated script running.

- If changing the content on your website makes no difference on the other website then it means that the data is already stored.

I’ll detail below why this is important.

How Websites Get Scraped

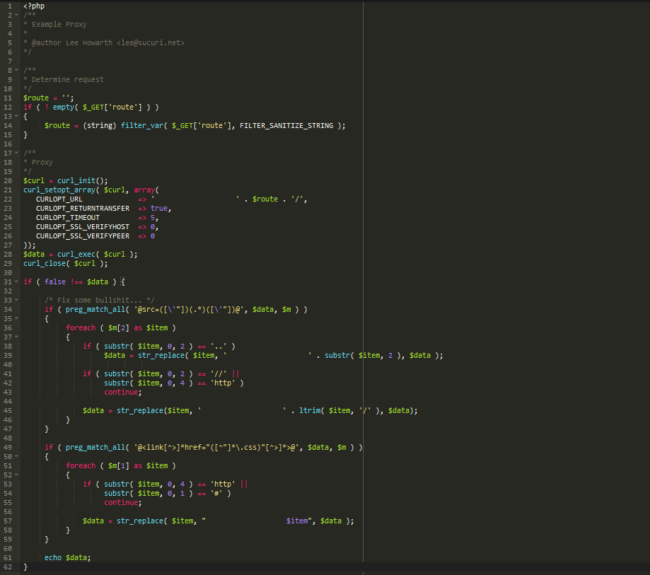

To demonstrate an example of how this attack happens, we can look at a script one of our developers put together (Lee Howarth):

This is all that it takes, in terms of code, to grab all the content from a website and still keep it functioning. It can be made even simpler than that because, to Googlebot, the site doesn’t need to look neat. All it needs is to have the same contents and assets.

Now that the code is ready, the next step is to generate as many hits as possible on the cloned website within Google. What this will do is increase the priority of that website in Google’s eyes. Once the number of hits gets high enough (among other various factors) the copied website’s search results will start to replace the victim’s site. To get the required hits, attackers make partial use of the rank that the attacker’s site already has then they get a bit more by posting the copied pages across their network of attack websites or even by making use of click farms (companies with low-paid workers used for fraudulent activities to generate clicks for SEO or revenue increase).

Once they succeed in stealing your results, they can make sudden changes to the site for any kind of malicious / malware-serving purpose or even just to feed their ongoing spam campaigns

Has My Site Been Compromised?

No.

This part is sometimes hard to understand because your site is being affected but not compromised. There is no need for a compromise for this kind of attack to work. The website that is stealing your results is the one that is compromised.

How to Fight Back

Let’s take a look at a few ways that we have to prevent and/or fix this.

- Make use of the rel=canonical tag within each page. This is a tag that tells the Search Index crawler bots which domain that the content actually belongs to. To better explain this, read this detailed article on rel=canonical by Yoast. This is something that most SEO plugins and practitioners should already add by default.

- Contact the owner of the compromised website. As I referenced above, someone else’s website is being used to attack your website, as such it’s a good idea to get in touch with them either through WHOIS information, or by social media like Twitter. Most websites nowadays include social media information directly on their homepage so it should be fairly easy to contact someone to inform them that they have been compromised and request they get the environment secured. (And it always feels good to be a good Samaritan no?)

- Find the WHOIS information for the cloned site. You can look up WHOIS information for the cloned site or make use of WHOIS services to find out who is hosting the cloned website. Get in touch with their abuse department or live support if available, and inform them of the event and request that it be stopped. If the site is using a CDN or a Web Application Firewall (WAF) then don’t hesitate to contact those vendors as well, so that they can forward the request on to the hosting provider or take direct action themselves.

- Set-up a Google alert. You can get Google to alert you if any sites publish an exact match to a title of your posts. It should alert you the moment your content is being stolen which is great, as its free and allows you to stop the issue before it becomes problematic.

- Block requests from the cloned site. By identifying the IP of the cloned site, you can request that your hosting provider block all requests from that IP. An easy way of achieving this is by adding a few lines to your .htaccess. Let’s say that the cloned site has the IP 192.168.190.190, you could add this to your .htaccess:

order allow,deny deny from 192.168.190.190 allow from all

- Report copied content to Google. Once you have identified your copied content, go to Google DMCA page or visit this direct link to the global form, and select Web Search. Be sure to fill everything out appropriately so you have all the nefarious links removed and your traffic returned within a couple of days

If it’s an automated script that is making a direct copy of the contents, simply blocking the cloned site’s IP should resolve the issue of the content being stolen, but it won’t instantly return your results and traffic. This is a good enough solution if you are short on time or the ranking hit wasn’t significant.

If your content data is already stored on the website then you should really try all the options to get the issue resolved as soon as possible.

Prevent Your Website SEO From Being Stolen

There is no 100% guaranteed way to stop content scrapers. Like most hackers and black hats, they will always find a way to get around any protection you put in place. There are many services like Grammarly and Copyscape which you can use to find copied content from your site. Or you can simply pick up a line from one of your posts and do a Google search with quotes (“line to look for”) and it should find all copied content if it was already indexed by Google.

The thought of being the target of an SEO attack shouldn’t leave you feeling vulnerable. It should encourage you to do regular checks and improve your security posture. There are a number of ways to eliminate a page from the SERPs, as detailed above.

If you do operate in a competitive sector it’s best to be proactive. Regular content reviews and duplicate checks either internal or external should be a part of your SEO strategy.

23 comments

“making it so that they are unable to determine which is the authoritative source.”

Matt Cutts said years ago that Google was more than on top of this nonsense.

I wouldn’t be so sure about that. Matt Cutts is great and all, but he hasn’t run the web spam team in months (…which is eons in tech-time). Looks like even a former Googler can’t get web spam removed quickly using manual actions – according to this recent article: https://www.seroundtable.com/former-googler-reports-spam-in-google-21921.html

Well that’ sort of my point. Google always says one thing about spam clouding their results, and the rest of us seeing something else.

Clearly spamming still works – they are using the same spam techniques as years ago.

I had this issue once and saw it a few times on other websites, and each time, the IP of the cloned website was actually not the IP used by the script (the reverse proxy) used to scrap the content. I had to dig in the Apache access log file to figure out which IP had to be blocked.

Hi,

Im currently having this same problem and would like to know how you managed to solve this issue?

You’ve got to figure out from your Apache log the IP address of the reverse proxy. Watch your Apache log live, access a page seldom visited through the mirror URL, you’ll see the IP address of the reverse procy accessing your website to fetch the content of this page. You just have to block it.

Found It, thanks.

So would this mean that the owner of true content on the cloned website either knows who has stolen your rankings or have actually had a hand in it themselves? I’m still kind of missing the point of why they would do this unless they were a competitor of yours or a SEO company that worked for a competitor of yours. How does it benefit anyone else?

The victim or original content producer doesn’t know. The cloner has some black hat agenda… could be anything. At worst maybe malware distribution or phishing of the stolen visitors from organic results…. or there may be a longer black hat game plan, ie. private blog networks, etc.

Yeah thats what I mean. The victim doesn’t know but I just don’t see the point in all of it unless the people that steal your your rankings and copy your site are somehow in cahoots with one of your competitors.

True it could just be malware or if you have a retail website they ould be phishing for cc details.

This is awesome and helpful article. Thanks for sharing.

thanks for the information

Thanks for the information

Hi Cesar. What we need is for the legit companies involved in this – the domain registrar, for example, to stop pointing at the site. If they refuse, they are complicit in making it easy to get to that stolen content. Maybe they like these thieves who register so many domains. I am deeply disappointed in my registrar for refusing to take action.

Is there any easy way to make a list of every URL on your site? I get the feeling that when you report it to Google you have to have all the URLs they copied – and if they cloned an older site that is hundreds of urls.

For anyone else in this boat, the way to get a list of every URL on your site is to have an SEO friend run a report in Screaming Frog.

I used my sitemap to generate the urls. It’s 1000 per day (24 hours) for the DMCA report. Yep, it can take a few days for a big site but it’s well worth the wait if the cloned urls are removed from search.

They aren’t actually totally removed – or at least they weren’t in my case. But at least they’re hidden unless someone wants to open them. EXCEPT for the home page of the cloned site. It is ranking and indexed and I’ve reported it TWICE to no avail.

The procedure I use:

1. Immediately disavow the offending domain in your disavow file (there may be links pointing to your legit site that will look suspicious to googlebot.)

2. Do a whois search for the domain owner and email a takedown notice. If the whois details are private, find out who is hosting the site and send them a DMCA takedown notice. However, DMCA is a US Act so is only applicable to US sites/domains: in other cases quote the relevant country’s Copyright Act.

3. File a DMCA report to Google (HQ is in Mountain View, CA, so they have to abide by DMCA) to remove all urls from search. The results, as you are aware can be a bit hit-and-miss, but keep trying.

This is purely damage-limitation and the best that can be done at the moment … I think. Prevention it is the best solution, but the combination of browser technology, scraper software and unscrupulous people make this a very difficult task.

Fast notification would be good: perhaps set up a google alert for a few of your exact page titles?

Thank you. I had not thought about the disavow issue. I better get on that. Ugh. The cloners are known malware purveyors in another country, so no help there. I have filed DMCA reports with Google. They removed internal pages, but I have twice filed on the home page of the cloned site and that one is still in the search results.

The way I knew about the clone the day the put it up was that I had tje infolinks plugin on my site (not activated). When they ed it that got turned on and infolinks emailed to say they added that domain to my account. I can see how much traffic they get from inside my infolinks account. And I turned on every possible ad hoping anyone who knows me would realize they were on the wrong site.

I tried to get infolinks to put “Content on this site is stolen” or something similar on the clone, but the person I messaged probably did not understand that they can definitely do that. They could even offer that as a reason to use infolinks. I got the idea from another site they cloned who had a way to put a pop-up on the cloned version.

I can’t understand why Google haven’t de-indexed the cloned homepage, indeed the whole domain. This must be so frustrating. With regard to the Infolinks ad’s, do they have your Publisher ID showing in the scripts on the cloned website? If so, I guess you will receive any revenue that’s generated … not a solution to the problem but a small consolation I suppose.

I don’t know, either. I thought maybe the first time was a glitch, but I submitted it again and still they didn’t de-index it.

Yes, the Infolinks are my Publisher ID, but they’ve only made 23 cents or something like that. The way I knew about the clone was that Infolinks emailed me that they added that site to my account.

I turned on all the ads to warn off anyone who might accidentally end up on the clone. Anyone who knows me would know I don’t run many ads and then hopefully notice how old the to post is and not end up infected or whatever that malware company is doing with that site.

Not really seeing a solution here, but nice to at least see someone writing about it.

Not sure how rel=canonical would matter at all. One of my sites has been recently scraped and shows up on the .net version of my domain. They used a script that simply replaced .com with .net for all pages… obviously including the rel=canonical – making it useless.

Our site is strong and aged, but shocked to see that this scrape job is actually picking up steam and starting to rank for some key terms (seeing it in Analytics as they didn’t even bother to replace our tracking codes).

I’m worried that Google DMCA takedown is all you can hope for as no one with any sense would scrape and publish an entire website without knowing there is no way to track them down. Disappointed again with Google on this one.

I recently have one of my sites cloned several times and it’s very frustrating to try remove them from the SERPS because (among other things) Google report spam form does not work. I have reported cloned sites at six months ago and I still see them indexed by Google.

So, I start thinking ways to fight back this on my on since they don’t remove those sites… I have tested some (in private) and as for the other’s, I would like your opinion’s.

The first thing I did was to find on my access logs (it was hard because they use Cloudflare), the IP from clone site and I block them on my server. The result of this is that the site doesn’t clone any more. But… they still have my titles and descriptions indexed under their domain…

The second solution I was thinking to implement is a small php script on my header to intercept requests from that specific IP and redirect them to other URL

The third solution is try to reclaim the spam site by putting a google verification tag on my site temporally on my site, validate him and ask the URL’s removal.

What do you think about this? Have anyone tried any from the above? Do you find other ways?

Comments are closed.